Unity MARS – What’s New?

The Unity Mixed and Augmented Reality Studio (catchily known as MARS) is a useful extension that adds functionality to support augmented and mixed reality content creation within the Unity engine. The MARS package and the set of companion apps associated with it provide the ability to address real-world objects and events as GameObjects. MARS helps build AR apps that are context-aware and responsive to physical space, and is a powerful tool for the creation of Unity content.

Unity understands the value of the MARS system and periodically updates it with new and enhanced features to keep it relevant, as it has done again recently. On the premise that upgrades are usually a good thing, what is new, or at least augmented, in the latest version of MARS, and how will it help you create the best content? Among the features that have been upgraded in the latest release are:

Content Manager. This gives users a product-centric view that brings together content available on GitHub, in the forums, and in the package manager in one place, so that it is easy to find and organise anything you may want to include. Though still regarded as a beta solution, the content manager is already a one-stop shop for all of your resources and samples. It is already useful and growing in stature, so if you don’t regularly use it, you should start.

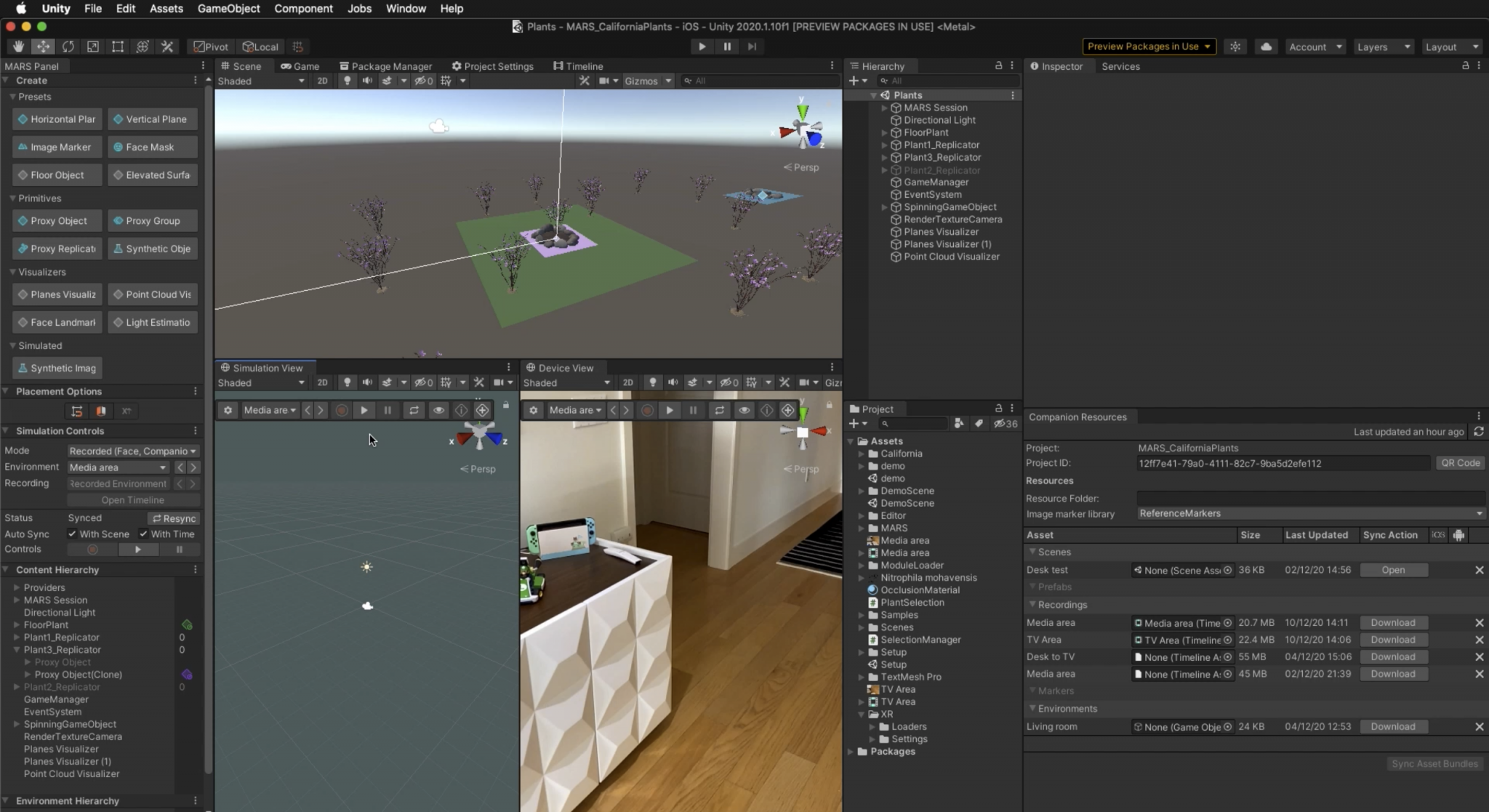

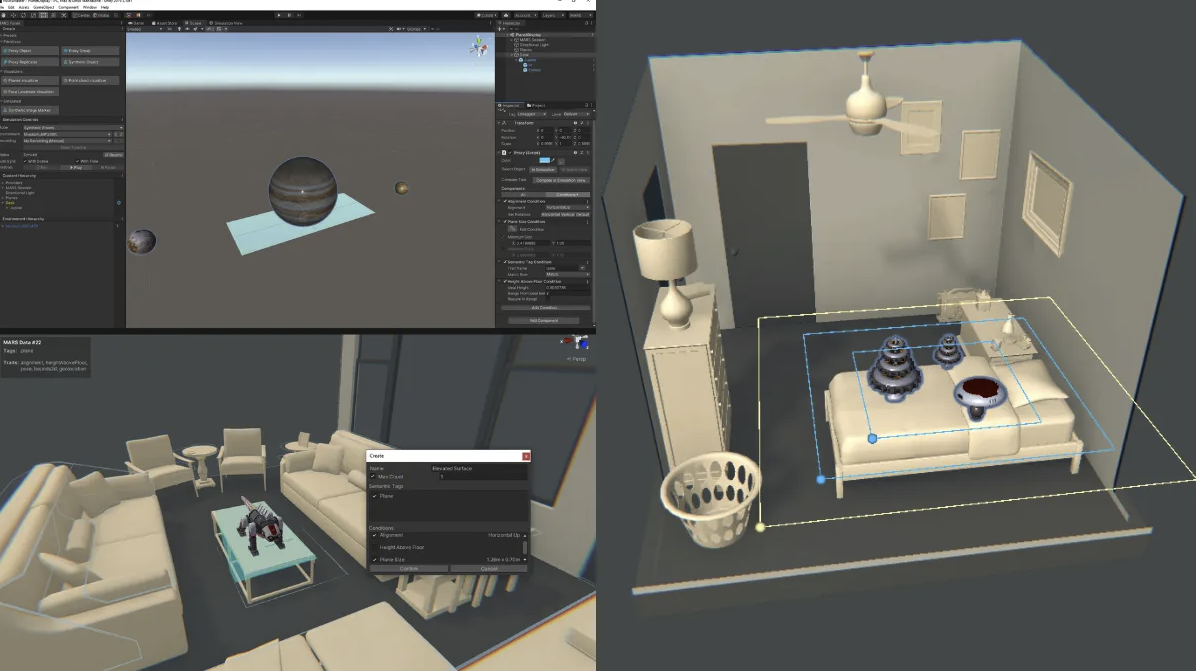

Simulation as a Subsystem. Now one of the most popular features in Unity, the simulation system speeds up the iteration cycles in your AR builds. It does so by enabling AR testing within the Unity Editor, where you can preview how an AR device would work with its physical surroundings. That not only saves you a huge amount of time, but also gives a realistic representation of your design intent by allowing you to test against a variety of environments that either imitate or come from the real world. Such simulation is very helpful during development, and means you can carry on with confidence that your app will work properly when finally compiled and released.

As a system, the simulation sub-structure can be broken down into two core functionalities that are conceptually separate, but work together to drastically reduce iteration time. These are:

- Preview the execution of a scene in isolation in Edit Mode.

- Set up an environment scene in the Editor, and provide AR data based on that environment.

The data used in simulation is synthetic: it is artificially generated rather than captured from real-world events. This data comes from a prefab that is instantiated in the environment scene, and Unity MARS provides a set of default environment prefabs that includes rooms, buildings, and outdoor spaces. You can also create your own simulated environments and add them to the content manager.
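To make the idea concrete, here is a minimal conceptual sketch of why synthetic environments shorten iteration. It is not the MARS API, and it is written in Python rather than Unity's C# purely for illustration. Every name in it (`Plane`, `PlaneProvider`, `SyntheticEnvironment`, `find_placement`) is hypothetical. The assumption is simply that app logic asks a data source for surfaces, so an authored environment can stand in for a real device.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional, Protocol


@dataclass(frozen=True)
class Plane:
    """A flat surface reported by an AR data source (sizes in metres)."""
    label: str
    width: float
    depth: float


class PlaneProvider(Protocol):
    """Anything that can report planes: a real device or a simulation."""

    def detect_planes(self) -> list[Plane]: ...


class SyntheticEnvironment:
    """Hypothetical stand-in for an environment prefab.

    Its planes are authored by hand rather than sensed from the real world.
    """

    def __init__(self, name: str, planes: list[Plane]) -> None:
        self.name = name
        self._planes = planes

    def detect_planes(self) -> list[Plane]:
        return list(self._planes)


def find_placement(
    provider: PlaneProvider, min_width: float, min_depth: float
) -> Optional[Plane]:
    """App logic under test: pick the first plane large enough for the content."""
    for plane in provider.detect_planes():
        if plane.width >= min_width and plane.depth >= min_depth:
            return plane
    return None


# Two authored environments to test the same logic against.
living_room = SyntheticEnvironment(
    "living_room",
    [Plane("shelf", 0.3, 0.2), Plane("table", 1.2, 0.8), Plane("floor", 4.0, 3.0)],
)
kitchen = SyntheticEnvironment("kitchen", [Plane("counter", 0.6, 0.4)])

if __name__ == "__main__":
    for env in (living_room, kitchen):
        match = find_placement(env, min_width=1.0, min_depth=0.5)
        print(env.name, "->", match.label if match else "no suitable surface")
```

Because `find_placement` only depends on the provider interface, the same placement rule can be checked against several environments in seconds, which is the kind of quick iteration the simulation system is aiming for.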

Body Tracking. One of the most exciting features of MARS is body tracking, which now supports human avatar rigs and body poses defined by Mecanim, Unity’s rich and sophisticated animation system. Unity MARS Simulation also supports synthetic human avatars that can be rigged and animated to create example environments for testing your experience. All of this is powered by AR Foundation. It is a powerful feature that makes it possible to virtually try on clothes, wear digital costumes, and run inexpensive motion capture directly from a mobile device. Body tracking is an increasingly robust aspect of the MARS system that will give ever more lifelike movements to your avatars.

Every new iteration of MARS introduces new and upgraded tools that are designed to increase your productivity while making the whole process easier and more intuitive.